Understanding how households respond to job loss: insights from the work of Lionel Wilner

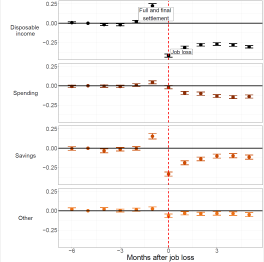

Lionel Wilner, a researcher at CREST and lecturer at ENSAE Paris, examined unemployment spells in a recent INSEE study entitled The Consumption Response to Unemployment: Evidence from French Bank Account Data. He co-authored it with Odran Bonnet (INSEE), François Le Grand (Rennes School of Business), Tom Olivia (INSEE) and Xavier Ragot (Sciences Po, CNRS, OFCE). This working paper explores how households respond financially to job loss. It uses bank data covering more than 6,500 households receiving unemployment benefits.

An innovative methodology

This research relies on high-frequency bank account data to examine how households adjust their spending and draw on their savings to cope with an unemployment shock. Over the first six months after a job loss, 36% of the drop in income is offset by lower spending. The remainder is mainly covered by drawing down liquid savings.

Press coverage and implications for public policy

This work sheds light on the margins along which households adjust, and it has implications for the design of optimal unemployment insurance. The study was recently featured in Les Échos (9 January 2025 edition), which stressed its importance for understanding the trade-offs households make when their income falls. The study quantifies the marginal propensity to consume during unemployment (0.36) and how it varies with factors such as household liquidity. In doing so, it contributes to debates on possible reforms of unemployment insurance.
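The arithmetic behind the 0.36 figure can be made concrete with a small Python sketch. It assumes the marginal propensity to consume is the fall in spending divided by the fall in income. Everything not absorbed by lower spending is treated as a single remainder, which the study attributes mainly to liquid savings. The function name `decompose_income_loss` and the household figures are hypothetical illustrations, not data or code from the study.

```python
def decompose_income_loss(income_drop: float, spending_cut: float) -> dict[str, float]:
    """Split an income drop into the share absorbed by lower spending (the MPC)
    and the remaining share (covered mainly by liquid savings in the study).

    Hypothetical helper for illustration only.
    """
    if income_drop <= 0:
        raise ValueError("income_drop must be positive")
    mpc = spending_cut / income_drop  # share of the income loss offset by spending cuts
    return {"mpc": mpc, "remainder_share": 1.0 - mpc}


# Hypothetical household: income falls by 1,000 euros and spending by 360 euros.
shares = decompose_income_loss(1000.0, 360.0)
print(f"MPC = {shares['mpc']:.2f}, remainder = {shares['remainder_share']:.2f}")
# prints "MPC = 0.36, remainder = 0.64"
```

With these illustrative numbers, 36% of the lost income is absorbed by reduced spending and 64% by other margins. This matches the split described above.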

An op-ed by Olivier Lopez and Pierre Vaysse for Les Echos

6 Jan. 2025

2024 CREST Highlights

As 2024 draws to a close, CREST reflects on a year filled with groundbreaking research, prestigious awards, and impactful initiatives. Here’s a look back at our key achievements.

📊 Research Breakthroughs: 93 Articles Published

CREST has published 93 articles so far this year, 62% of them in first-quartile (Q1) journals. These works reflect the breadth and depth of research conducted across CREST’s clusters. Here are some highlights:

Information Technology and Returns to Scale by Danial Lashkari, Arthur Bauer, and Jocelyn Boussard explores how technological advancements influence economies of scale, shedding light on contemporary production practices, in American Economic Review.

Locus of Control and the Preference for Agency by Marco Caliendo, Deborah Cobb-Clark, Juliana Silva-Goncalves, and Arne Uhlendorff investigates how personal traits shape individuals’ economic decisions, providing a deeper understanding of agency in economic behavior, in European Economic Review.

Global Mobile Inventors by Dany Bahar, Prithwiraj Choudhury, Ernest Miguelez, and Sara Signorelli examines the migration patterns of innovative talent worldwide, offering new perspectives on innovation dynamics, in Journal of Development Economics.

Testing and Relaxing the Exclusion Restriction in the Control Function Approach by Xavier D’Haultfoeuille, Stefan Hoderlein, and Yuya Sasaki develops methods to test and relax this identifying assumption in control function models, in Journal of Econometrics.

Are Economists’ Preferences Psychologists’ Personality Traits? A Structural Approach by Tomas Jagelka bridges economics and psychology, exploring how personality traits influence economic preferences, in Journal of Political Economy.

Autoregressive Conditional Betas by Francisco Blasques, Christian Francq, and Sébastien Laurent provides innovative methods to measure financial risk, critical for investment strategies, in Journal of Econometrics.

Model-based vs. Agnostic Methods for the Prediction of Time-Varying Covariance Matrices by Jean-David Fermanian, Benjamin Poignard, and Panos Xidonas compares methodologies for improving financial predictions under uncertainty, in Annals of Operations Research.

Corporate Debt Value Under Transition Scenario Uncertainty by Theo Le Guenedal and Peter Tankov addresses the valuation of corporate debt amid environmental and regulatory changes, in Mathematical Finance.

Semiparametric Copula Models Applied to the Decomposition of Claim Amounts by Sébastien Farkas and Olivier Lopez develops new actuarial techniques to better understand insurance claims, in Scandinavian Actuarial Journal.

On the Chaotic Expansion for Counting Processes by Caroline Hillairet and Anthony Réveillac advances mathematical models with applications in finance and beyond, in Electronic Journal of Probability.

Russia’s Invasion of Ukraine and Perceived Intergenerational Mobility in Europe by Alexi Gugushvili and Patrick Präg examines how geopolitical shocks affect societal perceptions and mobility, in British Journal of Sociology.

The Total Effect of Social Origins on Educational Attainment: Meta-analysis of Sibling Correlations From 18 Countries by Lewis R. Anderson, Patrick Präg, Evelina T. Akimova, and Christiaan Monden provides a meta-analysis of sibling correlations, offering fresh insights into education and inequality, in Demography.

Context Matters When Evacuating Large Cities: Shifting the Focus from Individual Characteristics to Location and Social Vulnerability by Samuel Rufat, Emeline Comby, Serge Lhomme, and Victor Santoni shows how location and social vulnerability, rather than individual characteristics alone, shape urban evacuations, in Environmental Science and Policy.

Gender Equality for Whom? The Changing College Education Gradients of the Division of Paid Work and Housework Among US Couples, 1968-2019 by Léa Pessin explores shifting dynamics in gendered divisions of labor among U.S. couples over the decades, in Social Forces.

The Augmented Social Scientist: Using Sequential Transfer Learning to Annotate Millions of Texts with Human-Level Accuracy by Salomé Do, Etienne Ollion, and Rubing Shen highlights how AI tools can assist in large-scale sociological research with human-level accuracy, in Sociological Methods and Research.

Investigating Swimming Technical Skills by a Double Partition Clustering of Multivariate Functional Data Allowing for Dimension Selection, by Antoine Bouvet, Salima El Kolei, and Matthieu Marbac, in Annals of Applied Statistics.

Full-model estimation for non-parametric multivariate finite mixture models, by Marie Du Roy de Chaumaray and Matthieu Marbac, in Journal of the Royal Statistical Society. Series B: Statistical Methodology.

Tail Inverse Regression: Dimension Reduction for Prediction of Extremes, by Anass Aghbalou, François Portier, Anne Sabourin, and Chen Zhou, in Bernoulli.

Proxy-analysis of the genetics of cognitive decline in Parkinson’s disease through polygenic scores, by Johann Faouzi, Manuela Tan, Fanny Casse, Suzanne Lesage, Christelle Tesson, Alexis Brice, Graziella Mangone, Louise-Laure Mariani, Hirotaka Iwaki, Olivier Colliot, Lasse Pihlstrom, and Jean-Christophe Corvol, in NPJ Parkinson’s Disease.

Benign Overfitting and Adaptive Nonparametric Regression, by Julien Chhor, Suzanne Sigalla, and Alexandre Tsybakov, in Probability Theory and Related Fields.

🎯 Discover more CREST publications on our HAL webpage.

🌍 Impactful Events and Conferences

CREST actively participated in and hosted events that fostered collaboration and knowledge exchange:

- European Parliament Panel: Sociologist Paola Tubaro led a discussion on alternatives to the platform-driven gig economy, bringing sociological insights to policy debates.

- Publication by the National Courts of Audit: Barometer of Fiscal and Social Contributions in France – Second Edition 2023.

- NeurIPS 2024: CREST had a strong presence with 19 papers selected, showcasing cutting-edge work in artificial intelligence and neural information processing.

- Cyber-Risk Conference Cyr2fi: Co-organized with École Polytechnique, this event highlighted the interdisciplinary approaches needed to address growing cybersecurity threats.

- Nobel Prize Lecture: Researchers and PhD students of the IP Paris Economics Department celebrated the 2023 Nobel Prize in Economics.

📅 Join future events: Visit our calendar.

2024 brought two new chairs at CREST:

- Cyclomob, led by Marion Leroutier, a regionally funded chair dedicated to research on sustainable urban mobility.

- Impact Investing Chair, led by Olivier-David Zerbib, which aims to maximize the positive environmental and social impact of investment.

🏆 Awards and Recognitions

2024 was a year of accolades for CREST:

- 5 ERC Grants for CREST in 2024: Yves Le Yaouanq has recently joined the 2024 group of ERC grantees, which already includes Samuel Rufat, Olivier Gossner, Julien Combe, and Marion Goussé.

- CNRS Bronze Medal: Clément Malgouyres for contributions to labor economics.

- L’Oréal-UNESCO Young Talent: Solenne Gaucher recognized for her sustainable development work.

- EALE Young Labor Economist Prize: Federica Meluzzi for innovative labor market studies.

- 2024 AEJ: Macroeconomics Best Paper Award: Giovanni Ricco won the award for his paper “The Transmission of Monetary Policy Shocks”, co-authored with Silvia Miranda-Agrippino.

- Louis Bachelier Prize: Peter Tankov honored for achievements in mathematical finance.

In 2024, several CREST researchers were also appointed to positions at various institutions:

- The Economic Journal: Roland Rathelot was appointed Managing Editor.

- The Econometric Society: Olivier Gossner was named a Fellow of the Econometric Society.

- French Ministry of Economics: Franck Malherbet was appointed a member of the Expert Group on the minimum wage (SMIC).

📚 Books and Projects

This year, CREST researchers authored several impactful books:

- Ce qui échappe à l’intelligence artificielle, edited by François Levin and Étienne Ollion, critically examines the limits of AI in understanding human complexity.

- Peut-on être heureux de payer des impôts ? by Pierre Boyer engages readers in a thought-provoking discussion on the role of taxation in society.

- Introduction aux Sciences Économiques, cours de première année à l’Ecole polytechnique by Olivier Gossner et al. serves as an accessible entry point for students into economic principles.

- Une étrange victoire, l’extrême droite contre la politique by Michaël Foessel and Étienne Ollion explores the relationship between politics and far-right ideologies.

2024 also saw the second season of Beyond the PhD, a video series dedicated to doctoral studies. This season followed students at different stages of their PhD across all CREST research clusters. It explored how their understanding of the PhD evolves over the course of their studies.

📣 Media and Outreach

CREST researchers were featured in:

- 80+ media outlets, including Le Monde, Le Nouvel Obs, Les Échos, University World News, BBC News Brazil, France Culture, Libération, Le Cercle des Économistes, Mediapart, AOC…

- 30+ op-eds and articles, shaping public discourse.

🎙️ Featured Interview: Pauline Rossi discusses economic inequalities in Le Cercle des Économistes. Listen here.

CREST celebrates a year of remarkable achievements and meaningful contributions to research, society, and global conversations. From groundbreaking publications to prestigious awards and impactful events, our community has continued to push boundaries and inspire innovation.

Looking ahead to 2025, we remain committed to fostering interdisciplinary research, addressing societal challenges, and nurturing a collaborative environment for researchers and students.

Video portrait of Clément Malgouyres, CNRS research scientist at CREST and recipient of the CNRS Bronze Medal

Op-ed | How should national defense be financed?

An op-ed by Pierre Boyer and Michel Bouvier for Les Echos.

Published 18 Dec. 2024

The invisible human labor behind automation in AI

An interview with Paola Tubaro for the Swiss newspaper Le Temps

16 December 2024

What makes a “good” marriage?

Marion Goussé, economist and professor at ENSAI, was a guest on the program “Entendez-vous l’éco ?” on France Culture.

Published Monday, 16 December 2024

What is a unicorn?

An op-ed by Pierre Rousseaux for Citéco.

13/12/2024

Launch of a MOOC on sustainable finance with Olivier-David Zerbib

For the first time, the Institut Louis Bachelier, the Institut de la Finance Durable and the Horizon & Beyond endowment fund are joining forces to create a MOOC on sustainable finance.

The sustainable finance MOOC addresses several current issues, ranging from the challenges of financing the transition to the debates around the Green Deal during the recent European elections. It aims to cover the entire sustainable finance ecosystem through presentations by a panel of speakers from diverse backgrounds. The course is designed for students, professionals and policymakers alike, and aims to meet each group’s specific needs.

What is it?

- A free online training tool, open to all, with the ambition of becoming a reference in its field

- A project built on a knowledge base grounded in a scientific approach

- Developed in partnership with the Horizon & Beyond Foundation, the Institut de Finance Durable, the Institut Louis Bachelier, their members and representatives of specialized master’s programs

What does it offer?

- An independent, even-handed and practical reference in media format

- A reference tool for continuing education

- Several levels of expertise

- Suitable for initial (degree) education

- A training component on current topics that are still evolving.

A few key concepts you will discover in this MOOC!

- Social, environmental and governance criteria

- Double materiality

- Non-financial approaches

- Taxonomy and regulation

- Responsible financial products